NIST AI RMF Implementation: A Practitioner's Guide

The NIST AI Risk Management Framework (AI RMF 1.0) is one of the most widely referenced voluntary frameworks for managing risk in AI systems. Published by the National Institute of Standards and Technology in January 2023, it provides a structured approach for organizations to identify, assess, and mitigate the risks that AI systems introduce across their full lifecycle. Implementation is not a compliance checkbox exercise. Done correctly, it builds the institutional capacity to govern AI reliably as the technology evolves.

This guide walks through NIST AI RMF implementation from a practitioner's perspective: what each function requires, where organizations typically stumble, and how to sequence the work to get results without drowning in process.

Understanding the NIST AI RMF Structure

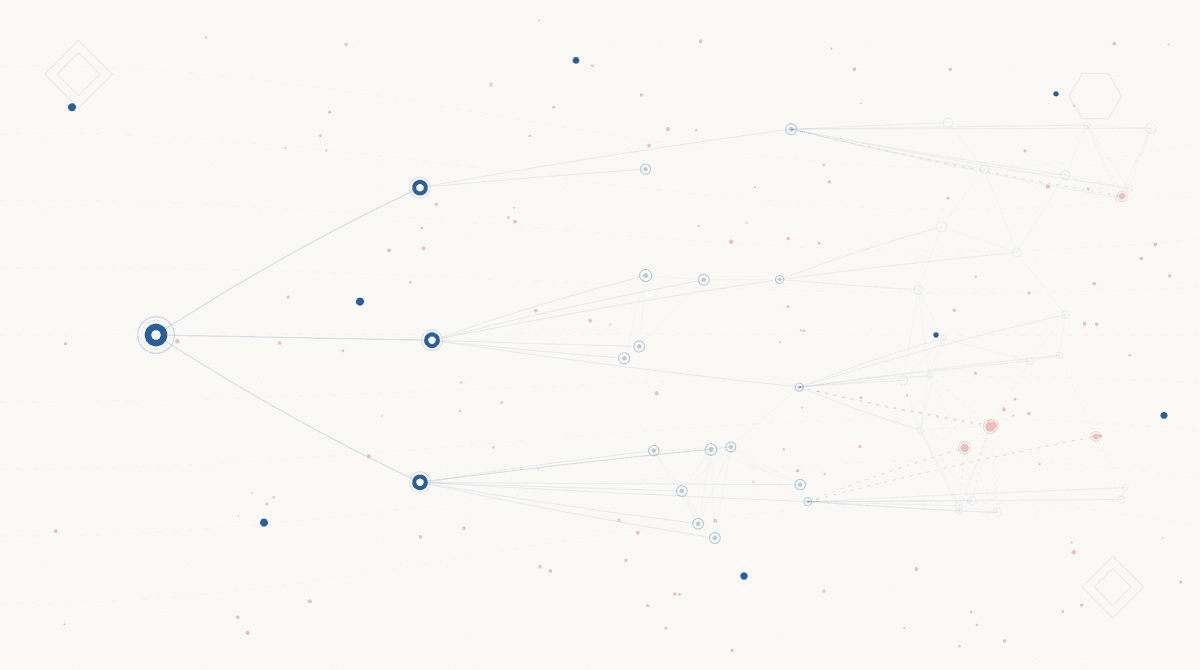

The framework has two parts. Part 1 establishes foundational concepts: how to frame AI risk, why it differs from traditional IT and software risk, and what characteristics make an AI system trustworthy (for example, valid and reliable, safe, secure and resilient, and fair with harmful bias managed). Part 2 contains the Core, which defines four interdependent functions: GOVERN, MAP, MEASURE, and MANAGE.

Each function contains categories and subcategories. GOVERN has six categories, MAP has five, MEASURE has four, and MANAGE has four. In total, there are 72 subcategories describing specific outcomes. The framework does not prescribe exactly how to achieve these outcomes. That is intentional. Implementation looks different for a hospital deploying a clinical decision support tool than for a bank using ML-based credit scoring. The framework provides structure; practitioners provide the judgment.

| Function | Purpose | Key Question |
|---|---|---|
| GOVERN | Establish organizational context, policies, and accountability | How are we organized to manage AI risk? |
| MAP | Identify and classify AI systems and their risk context | What AI are we using and what can go wrong? |
| MEASURE | Analyze and quantify AI risks with appropriate tools | How bad is the risk and how do we know? |
| MANAGE | Prioritize and apply risk responses; track residual risk | What are we doing about it? |
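If you plan to track implementation status outcome by outcome, the Core's structure can be encoded directly. The sketch below lists the number of subcategories in each category as we read AI RMF 1.0 (GOVERN 1 through MANAGE 4); verify the counts against the published Core before relying on them. The tracker and its status strings are hypothetical.

```python
# Number of subcategories in each category of the AI RMF 1.0 Core, by function.
# Position i holds the count for category i + 1 (for example, GOVERN 1 has 7).
CORE_SUBCATEGORY_COUNTS: dict[str, list[int]] = {
    "GOVERN": [7, 3, 2, 3, 2, 2],
    "MAP": [6, 3, 5, 2, 2],
    "MEASURE": [3, 13, 3, 3],
    "MANAGE": [4, 4, 2, 3],
}


def subcategory_ids() -> list[str]:
    """Expand the counts into identifiers from 'GOVERN 1.1' through 'MANAGE 4.3'."""
    ids = []
    for function, counts in CORE_SUBCATEGORY_COUNTS.items():
        for category, count in enumerate(counts, start=1):
            for sub in range(1, count + 1):
                ids.append(f"{function} {category}.{sub}")
    return ids


def new_tracker() -> dict[str, str]:
    """Hypothetical gap-assessment tracker: every outcome starts as 'not started'."""
    return {sid: "not started" for sid in subcategory_ids()}


if __name__ == "__main__":
    categories = sum(len(c) for c in CORE_SUBCATEGORY_COUNTS.values())
    print(categories, len(subcategory_ids()))  # 19 72
    tracker = new_tracker()
    tracker["GOVERN 1.6"] = "in progress"  # GOVERN 1.6 covers AI system inventory
```

The totals reproduce the structure described above: 19 categories and 72 subcategories.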

Phase 1: GOVERN

GOVERN is the foundation. It establishes the policies, roles, and cultural conditions that make everything else work; NIST describes it as a cross-cutting function that informs the other three. Many organizations skip straight to MAP because inventory feels more tangible, but without GOVERN, MAP activities lack organizational backing and produce findings with no clear owner.

What GOVERN Requires

The six GOVERN categories cover: policies, processes, and procedures for managing AI risk, including risk tolerance; accountability structures; workforce diversity, equity, inclusion, and accessibility; an organizational culture that considers and communicates AI risk; engagement with relevant AI actors; and third-party and supply chain risk. For most organizations, the practical starting point is a clear statement of AI risk appetite and a defined accountability model.

A GOVERN foundation needs at minimum:

- An AI risk policy approved at the executive or board level

- Clear ownership for AI risk, whether a CISO, Chief AI Officer, or a cross-functional AI Governance Committee

- Documented roles for AI developers, deployers, and operators distinguishing their respective obligations

- A process for AI system onboarding that requires risk review before production deployment

- Third-party AI risk provisions incorporated into vendor contracts
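The onboarding requirement is easiest to enforce as a hard gate in the deployment pipeline rather than a checklist. The following is a minimal sketch of such a gate, assuming a hypothetical `OnboardingRequest` intake record; the field names and blocking rules are illustrative and are not prescribed by NIST.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OnboardingRequest:
    """Hypothetical intake record for an AI system seeking production approval."""
    system_name: str
    risk_owner: Optional[str]        # named accountable owner, if any
    risk_review_completed: bool      # pre-deployment risk review signed off
    third_party: bool                # system or model supplied by a vendor
    vendor_ai_clauses: bool = False  # AI risk provisions present in the vendor contract


def onboarding_blockers(req: OnboardingRequest) -> list[str]:
    """Return reasons to block production deployment; an empty list means proceed."""
    blockers = []
    if not req.risk_owner:
        blockers.append("no named risk owner")
    if not req.risk_review_completed:
        blockers.append("risk review not completed")
    if req.third_party and not req.vendor_ai_clauses:
        blockers.append("vendor contract lacks AI risk provisions")
    return blockers


if __name__ == "__main__":
    req = OnboardingRequest("resume-screener", risk_owner=None,
                            risk_review_completed=True, third_party=True)
    print(onboarding_blockers(req))
    # ['no named risk owner', 'vendor contract lacks AI risk provisions']
```

Returning every blocker at once, rather than failing on the first, gives the requesting team a complete to-do list in a single review cycle.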

Common GOVERN Obstacles

The most common failure is writing policy documents that no one owns. GOVERN outputs must be backed by real accountability. If the AI governance policy has no named owner, no review cycle, and no consequence for non-compliance, it is a shelf document. Governance without enforcement capacity is theater.

A second obstacle is treating AI risk as IT risk. AI introduces sociotechnical harms, bias, and explainability concerns that fall outside traditional security governance. The CISO or IT team should be involved, but AI governance requires business unit leadership, legal and privacy counsel, and often HR or clinical leadership depending on the use case. Build the coalition before you build the policy.

Need to build your GOVERN foundation?

Z Cyber helps organizations establish AI governance structures aligned to NIST AI RMF from policy through operational accountability.

Phase 2: MAP

MAP is where organizations get concrete. The goal is to identify every AI system in use, understand its deployment context, and classify its risk level. This is harder than it sounds. Many enterprises have significantly more AI systems in production than their inventories reflect, particularly after the rapid expansion of generative AI tools in 2023 and 2024.

Building the AI Inventory

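An accurate AI inventory is the precondition for everything in MAP. (The AI RMF itself places inventory mechanisms under GOVERN 1.6, but the discovery work feeds MAP directly.) Start by defining what counts as an AI system for your organization's purposes. The AI RMF definition is broad: an engineered or machine-based system that can, for a given set of objectives, generate outputs such as predictions, recommendations, or decisions influencing real or virtual environments. That includes ML models, generative AI tools, decision-support systems, and automated workflows with embedded ML components.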

Discovery methods should include:

- Direct surveys of engineering, product, and operations teams

- Review of cloud service agreements and SaaS contracts for AI features

- Procurement records for any tool purchased in the last three years with ML or AI capabilities

- Shadow AI discovery (see our guide on shadow AI governance for the full methodology)

In our advisory engagements, organizations typically discover 40 to 60 percent more AI systems than their initial estimate. This is not negligence. It reflects how quickly AI capabilities have been embedded into existing tools by vendors, often without explicit disclosure.
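Consolidating results from several discovery methods is largely a deduplication problem: the same tool often appears in a survey response, a SaaS contract, and a procurement record under slightly different names. The sketch below assumes each finding is a dict with hypothetical `vendor`, `product`, and `source` keys and normalizes only case and surrounding whitespace; real inventories usually need fuzzier matching. The vendor and product names are invented for illustration.

```python
from collections import defaultdict


def consolidate_inventory(findings):
    """Merge discovery findings into one inventory keyed by normalized (vendor, product).

    Each finding is a dict with hypothetical keys 'vendor', 'product', and 'source'
    (for example 'survey', 'contracts', 'procurement', or 'shadow').
    Returns {(vendor, product): set of sources that surfaced it}.
    """
    inventory = defaultdict(set)
    for finding in findings:
        key = (finding["vendor"].strip().lower(), finding["product"].strip().lower())
        inventory[key].add(finding["source"])
    return dict(inventory)


def missed_by_survey(inventory):
    """Systems surfaced only by contracts, procurement, or shadow AI discovery."""
    return sorted(key for key, sources in inventory.items() if "survey" not in sources)


if __name__ == "__main__":
    findings = [
        {"vendor": "Acme", "product": "HelpDesk Copilot", "source": "survey"},
        {"vendor": "acme ", "product": "helpdesk copilot", "source": "contracts"},
        {"vendor": "DataCo", "product": "Forecaster", "source": "procurement"},
    ]
    inventory = consolidate_inventory(findings)
    print(len(inventory))               # 2
    print(missed_by_survey(inventory))  # [('dataco', 'forecaster')]
```

Systems that only surface through contracts, procurement, or shadow AI discovery are exactly the gap between the initial estimate and the real inventory.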

Classifying Risk Context

For each system in inventory, MAP requires assessing the risk context: Who are the affected groups? What are the potential harms, including bias, privacy violations, and safety risks? What is the operational context, and how consequential are system outputs? The framework's risk context includes both technical risks and societal or organizational impacts.

Risk classification does not require formal quantification at this stage. MAP is about understanding the landscape, not calculating precise probabilities. A simple High, Medium, Low tiering based on impact severity and scope of affected populations is sufficient to guide prioritization in MEASURE and MANAGE.
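A tiering rule this simple can live in a spreadsheet, but writing it down as code makes the criteria explicit and repeatable across reviewers. The sketch below uses a conventional risk-matrix approach, multiplying a severity rating by a scope rating. The three-point scales, their descriptions, and the cut-offs are assumptions to calibrate against your own risk appetite, not values from the AI RMF.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Worst credible harm if the system errs (hypothetical 3-point scale)."""
    LIMITED = 1      # inconvenience; easily noticed and reversed
    SIGNIFICANT = 2  # financial, privacy, or access-to-service harm
    SEVERE = 3       # safety, legal-rights, or irreversible harm


class Scope(IntEnum):
    """Breadth of the affected population (hypothetical 3-point scale)."""
    NARROW = 1       # small internal user group
    BROAD = 2        # large workforce or customer segment
    PUBLIC = 3       # general public or vulnerable populations


def risk_tier(severity: Severity, scope: Scope) -> str:
    """Risk-matrix tiering: multiply the two ratings, then apply cut-offs.

    The cut-offs (>= 6 High, >= 3 Medium) are illustrative assumptions.
    """
    score = severity * scope
    if score >= 6:
        return "High"
    if score >= 3:
        return "Medium"
    return "Low"


if __name__ == "__main__":
    print(risk_tier(Severity.SIGNIFICANT, Scope.PUBLIC))  # High
    print(risk_tier(Severity.SEVERE, Scope.NARROW))       # Medium
    print(risk_tier(Severity.LIMITED, Scope.BROAD))       # Low
```

With these cut-offs, a severe harm is never tiered Low, even when the affected group is small.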

Phase 3: MEASURE

MEASURE operationalizes risk analysis. Where MAP identifies and classifies systems, MEASURE applies metrics, evaluations, and testing to assess how well risks are understood and controlled. To be useful, AI risk measurement should be consistent, comparable across systems, and integrated into development and deployment processes rather than performed as a one-time assessment.

What to Measure

The MEASURE function has four categories: identifying and applying appropriate methods and metrics, evaluating AI systems for trustworthy characteristics, tracking identified risks over time, and gathering feedback on the efficacy of measurement. Practically, MEASURE work includes:

- Bias and fairness testing: Evaluating model performance across demographic subgroups using appropriate statistical methods

- Robustness testing: Assessing how models perform under adversarial inputs, distribution shift, or out-of-distribution data

- Explainability assessment: Documenting whether model outputs can be interpreted and explained to affected parties in meaningful terms

- Performance monitoring: Establishing baseline metrics and tracking drift in production

- Human oversight adequacy: Evaluating whether human review mechanisms are calibrated to the risk level of system outputs
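As a concrete starting point for bias and fairness testing, the sketch below compares selection rates (the share of positive predictions) across subgroups and flags any group whose rate falls below a fraction of the most-favored group's rate. The default 0.8 threshold mirrors the four-fifths rule of thumb from US employment-selection guidance; it is a screening heuristic, not a general legal or statistical test, and selection-rate parity is only one of several fairness metrics whose suitability depends on the use case.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Share of positive predictions (label 1) within each subgroup."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    return {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}


def flag_disparities(groups, predictions, threshold=0.8):
    """Groups whose impact ratio falls below the threshold (four-fifths heuristic by default)."""
    ratios = impact_ratios(selection_rates(groups, predictions))
    return sorted(g for g, r in ratios.items() if r < threshold)


if __name__ == "__main__":
    groups = ["A"] * 10 + ["B"] * 10
    preds = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7  # A: 60% positive, B: 30%
    print(flag_disparities(groups, preds))          # ['B']
```

A flag is a prompt for investigation (data quality, label bias, legitimate explanatory factors), not a verdict on its own.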

The Measurement Gap

Many organizations have more measurement capability for traditional IT systems than for AI systems. SIEM, EDR, and vulnerability scanners produce continuous data on security posture. AI risk measurement requires new tooling and new methodologies. Model monitoring platforms, bias testing libraries, and red-teaming processes often need to be built or procured.
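Drift tracking is one measurement that can be built in-house before a monitoring platform is procured. The sketch below computes the Population Stability Index (PSI), a drift metric commonly used in credit-risk modeling, between a baseline sample of a model input or score and a production sample. It uses equal-width bins over the baseline range for simplicity; quantile bins are a common alternative. Practitioners often read PSI below 0.1 as stable, 0.1 to 0.25 as moderate shift, and above 0.25 as significant shift; those cut-offs are an industry rule of thumb, not part of the AI RMF.

```python
import math


def psi(baseline, production, n_bins=10, eps=1e-6):
    """Population Stability Index: sum over bins of (a - e) * ln(a / e).

    e and a are the baseline and production proportions per bin. Both samples
    must be non-empty. Bins are equal-width over the baseline range; production
    values outside that range are clamped into the edge bins. `eps` floors
    empty bins so the logarithm stays finite.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(production)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]           # roughly uniform on [0, 1)
    shifted = [0.5 + i / 200 for i in range(100)]      # mass moved to the upper half
    print(round(psi(baseline, baseline), 6))           # 0.0
    print(psi(baseline, shifted) > 0.25)               # True
```

Run on a schedule against a frozen baseline, a check like this turns "tracking drift in production" into a number that can trigger escalation.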

A pragmatic approach: begin with the highest-risk systems identified in MAP. Do not attempt to build full MEASURE coverage for all systems simultaneously. Establish rigorous measurement for two or three high-risk systems, learn from that experience, and expand the methodology to lower-risk systems over subsequent quarters.

Assessing your AI governance maturity?

Z Cyber's AI Security Advisory service covers NIST AI RMF gap assessment and roadmap development.

Phase 4: MANAGE

MANAGE closes the loop. It translates risk information produced by MAP and MEASURE into prioritized actions, residual risk acceptance, and continuous monitoring. MANAGE is where AI governance produces operational outcomes rather than documentation.

Risk Response Options

NIST AI RMF recognizes four risk response options: accept, transfer, mitigate, and avoid. These map closely to traditional enterprise risk management options, but the mechanics are AI-specific:

- Accept: Document residual risk with appropriate executive sign-off. Appropriate for low-risk systems or residual risks after mitigation.

- Transfer: Shift risk through contractual mechanisms (vendor liability, insurance) or by delegating oversight to parties better positioned to manage it.

- Mitigate: Apply technical controls (guardrails, output filtering, human review), process controls (approval workflows, documentation requirements), or model changes (retraining, fine-tuning, replacement).

- Avoid: Discontinue the AI system or use case entirely when risk exceeds organizational tolerance and cannot be adequately mitigated.
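Whichever response is chosen, the decision should be recorded in a form that can be checked. The sketch below models a hypothetical risk-register entry and flags documentation gaps, such as an accepted risk with no executive sign-off, or a High-tier risk accepted without any prior mitigation. The field names and rules are illustrative assumptions drawn from the response descriptions above, not a NIST-defined schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Response(Enum):
    """The four AI RMF risk response options."""
    ACCEPT = "accept"
    TRANSFER = "transfer"
    MITIGATE = "mitigate"
    AVOID = "avoid"


@dataclass
class RiskEntry:
    """Hypothetical risk-register row for one identified AI risk."""
    system: str
    description: str
    tier: str                                 # "High", "Medium", or "Low" from MAP
    response: Response
    executive_signoff: Optional[str] = None   # approver; required to accept
    controls: tuple[str, ...] = ()            # technical or process controls applied
    counterparty: Optional[str] = None        # vendor or insurer, for transfer

    def gaps(self) -> list[str]:
        """Return documentation gaps that should block closing this entry."""
        issues = []
        if self.response is Response.ACCEPT and not self.executive_signoff:
            issues.append("accepted risk lacks executive sign-off")
        if self.response is Response.ACCEPT and self.tier == "High" and not self.controls:
            issues.append("high-tier risk accepted without prior mitigation")
        if self.response is Response.MITIGATE and not self.controls:
            issues.append("mitigation chosen but no controls recorded")
        if self.response is Response.TRANSFER and not self.counterparty:
            issues.append("transfer chosen but no counterparty recorded")
        return issues


if __name__ == "__main__":
    entry = RiskEntry("chat-assistant", "prompt injection exposes data", "High", Response.ACCEPT)
    print(entry.gaps())
    # ['accepted risk lacks executive sign-off', 'high-tier risk accepted without prior mitigation']
```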

Sustaining MANAGE Over Time

MANAGE is not a one-time activity. AI systems drift. Models degrade. Use cases expand beyond their original scope. Threat landscapes evolve. A mature MANAGE function combines event-driven reviews, triggered by model updates, significant incidents, or use case changes, with calendar-based reviews for all systems regardless of changes.

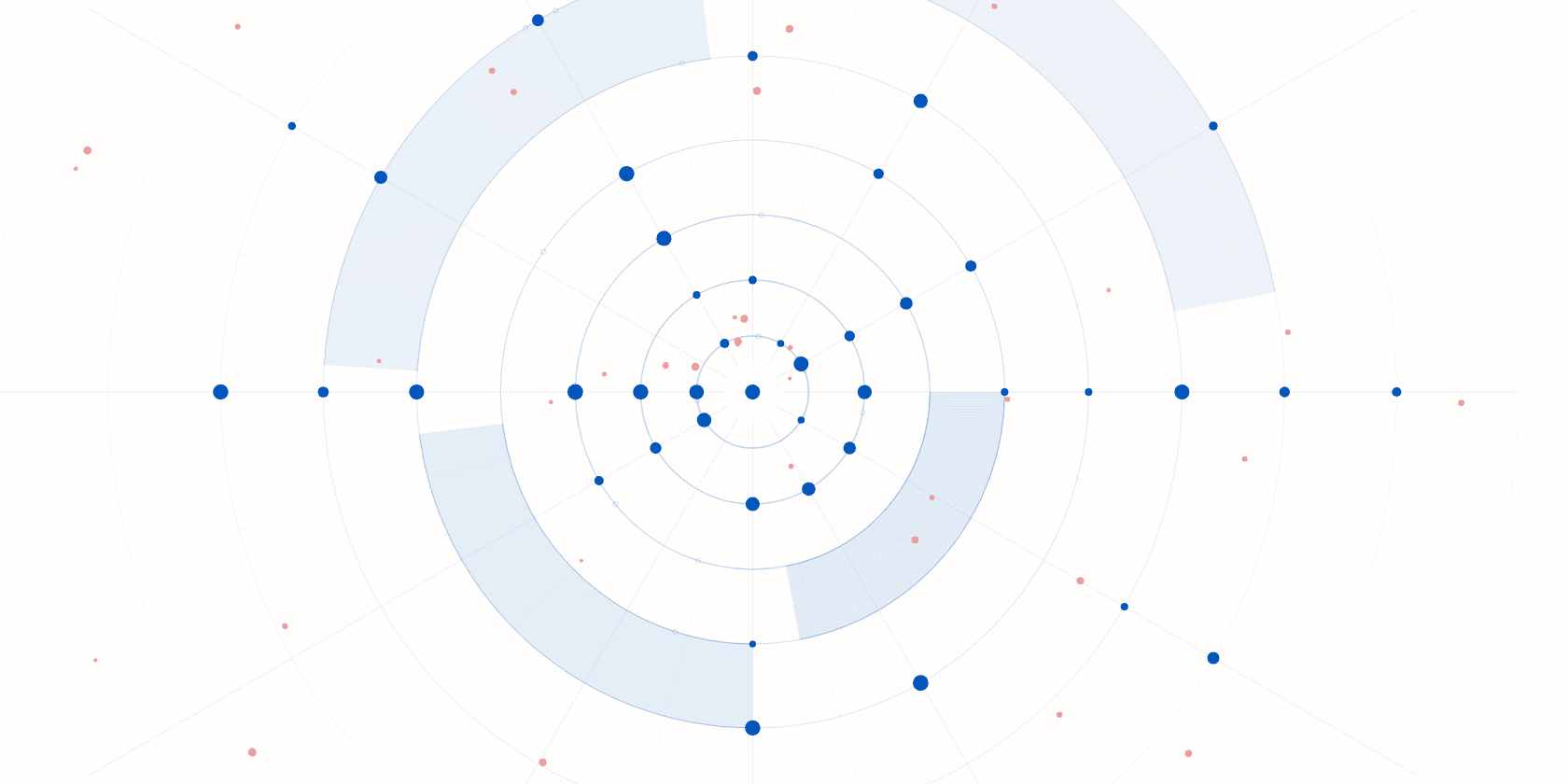

The review cadence should match risk level. High-risk systems warrant quarterly review at minimum. Medium-risk systems can operate on a semi-annual cycle. Low-risk systems require annual review and are appropriate candidates for automated monitoring with exception-based escalation.
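The tier-based cadence, combined with the event-driven triggers described earlier, reduces to a small scheduling rule. This sketch assumes tiers named High, Medium, and Low, three hypothetical trigger event names, and a policy that a triggering event makes a review due immediately; adjust each to your own policy.

```python
import calendar
from datetime import date

# Calendar cadence by tier, in months: quarterly, semi-annual, annual.
CADENCE_MONTHS = {"High": 3, "Medium": 6, "Low": 12}

# Hypothetical event names that trigger an immediate, out-of-cycle review.
TRIGGER_EVENTS = {"model_update", "significant_incident", "use_case_change"}


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day to the target month's length."""
    month_index = d.month - 1 + months
    year, month = d.year + month_index // 12, month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def next_review(tier: str, last_review: date, events_since: set[str], today: date) -> date:
    """Due date of the next review: today if a trigger event occurred, else the calendar date."""
    if events_since & TRIGGER_EVENTS:
        return today
    return add_months(last_review, CADENCE_MONTHS[tier])


if __name__ == "__main__":
    print(next_review("High", date(2025, 1, 15), set(), date(2025, 2, 1)))             # 2025-04-15
    print(next_review("Low", date(2025, 1, 15), {"model_update"}, date(2025, 2, 1)))  # 2025-02-01
```

Clamping the day keeps schedules stable for reviews anchored on the 29th through the 31st (for example, November 30 plus three months lands on February 28).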

NIST AI RMF and Adjacent Frameworks

Practitioners implementing NIST AI RMF rarely do so in isolation. Most organizations are simultaneously maintaining NIST CSF, SOC 2, ISO 27001, or HIPAA compliance programs. NIST AI RMF is designed to complement these frameworks, not replace them.

The NIST CSF 2.0 Govern function and the NIST AI RMF GOVERN function address overlapping governance concerns. Organizations should align these rather than maintain separate governance structures. NIST also publishes crosswalk documents that map the AI RMF to other frameworks and standards, such as ISO/IEC 42001 and the EU AI Act.

For organizations with AI governance obligations in financial services, the OCC, Federal Reserve, and CFPB have each referenced NIST AI RMF principles in recent guidance. Regulators are not mandating the framework by name in most cases, but alignment provides a defensible governance posture during examinations.

Organizations building toward HITRUST AI certification will find that NIST AI RMF implementation covers approximately 65 to 70 percent of HITRUST AI control requirements. The remaining gap focuses primarily on HITRUST's more prescriptive technical controls and its third-party assessment requirements.

Three Things to Do This Week

1. Conduct a rapid AI inventory scan. Survey three departments: IT, product, and operations. Ask each to list every AI tool or ML-enabled feature their team uses, including embedded AI in SaaS products. You will have a preliminary inventory by Friday that surfaces your highest-priority MAP work.

2. Identify your AI risk owner. If no one in your organization can answer "who owns AI risk?", you have a GOVERN gap. Name an interim owner this week, even if a formal accountability structure takes months to establish. Risk without an owner accumulates undetected.

3. Read the NIST AI RMF Playbook. NIST publishes a companion Playbook with suggested actions for each subcategory. It is free, practical, and significantly more actionable than the core framework document. Budget roughly two hours for a first pass; those hours can save weeks of trying to interpret abstract framework language in implementation workshops.

Frequently Asked Questions

What is the NIST AI RMF?

The NIST AI Risk Management Framework (AI RMF 1.0) is a voluntary framework published by the National Institute of Standards and Technology in January 2023. It provides organizations with structured guidance for identifying, assessing, and managing risks associated with AI systems across their full lifecycle. The framework is organized into two parts: Part 1 covers foundational concepts, including how to frame AI risk and the characteristics of trustworthy AI, and Part 2 contains the Core (four functions: GOVERN, MAP, MEASURE, MANAGE).

How long does NIST AI RMF implementation take?

A foundational implementation typically takes 3 to 6 months for organizations starting from scratch. This includes AI inventory, risk classification, policy development, and establishing measurement baselines. Mature implementations with all four functions operationalized take 9 to 18 months. Timeline depends heavily on the number of AI systems in scope, organizational complexity, and whether a dedicated governance team is in place.

Is NIST AI RMF mandatory?

The NIST AI RMF is voluntary for most US organizations. However, federal agencies are increasingly required or strongly encouraged to align with it under executive orders and OMB guidance. Financial regulators, healthcare regulators, and defense procurement channels are also beginning to reference NIST AI RMF alignment in their expectations. Even where it is not mandatory, alignment provides a defensible governance posture and maps well to the EU AI Act.

What is the difference between NIST AI RMF and NIST CSF?

NIST CSF addresses cybersecurity risk for information systems and critical infrastructure. NIST AI RMF addresses risk specific to AI systems, including bias, explainability, fairness, robustness, and sociotechnical harms in addition to security. Organizations should layer NIST AI RMF on top of their existing NIST CSF program for AI systems, not replace one with the other.

How does NIST AI RMF relate to the EU AI Act?

NIST AI RMF and the EU AI Act share significant conceptual overlap, particularly around risk classification, transparency, and human oversight. Organizations implementing NIST AI RMF are well-positioned to demonstrate EU AI Act compliance for systems deployed in or affecting EU markets, though the Act imposes additional mandatory requirements beyond what the voluntary framework covers.

What is GOVERN in the NIST AI RMF?

GOVERN is the foundational function of the NIST AI RMF. It establishes the organizational context, culture, policies, and accountability structures that make the other three functions possible. GOVERN includes setting AI risk tolerances, defining roles and responsibilities, creating policies for responsible AI use, and establishing accountability mechanisms. Without a mature GOVERN function, MAP, MEASURE, and MANAGE activities lack the organizational backing needed to sustain them.

Ready to implement NIST AI RMF?

Z Cyber's AI Security Advisory team guides organizations through NIST AI RMF implementation from gap assessment through operational governance.
